The first edition was published in 2020, and with the pace of change being as brutal and unforgiving as it is, I started making notes for the second edition within a month of finishing the manuscript. The overall structure has remained the same, but I go far deeper into the different topics in the 2nd edition. There are also more visuals and a couple of new topics. This series of articles provides a summary of each of the chapters with some personal afterthoughts. Serverless Beyond the Buzzword 2nd edition can be purchased here: https://link.springer.com/book/10.1007/978-1-4842-8761-3

Written article continues below the video

The first chapter is intended to establish a basic understanding of my definition of serverless. Jargon and technical terms are minimal or explained.

Serverless is a means to create software that will run on a server fully managed by a cloud provider instead of a server managed by your organisation, and we should only be paying for actual utilisation, not idle time or availability. What it really means is that application teams can focus on service configuration and application code – in short, business value, as opposed to plumbing.

The word “Serverless” is a bit of a misnomer as there actually are servers involved in storing and running the code. It is called Serverless because the developers no longer need to manage, update, or maintain the underlying servers, operating systems, or software.

For me to consider a service to be Serverless, the following should apply:

- A significant portion of the service is managed by the cloud provider – including the operating system, most software, and any common dependencies. We also expect redundancy, scalability, and, to some degree, security to be managed and automated.

- We pay only for actual usage, for example, the number of requests, the amount of storage, or the duration for which a service is actually used. It would not be considered Serverless if we had to pay for idle time.

- Some services bill for idle time; however, due to their nature or our use case, they can be turned off automatically when not needed and turned back on again automatically when required. This approach helps minimise being billed for idle time and so creates a Serverless experience. However, if this approach compromises the security of the solution in any way or makes it unstable, then it should not be considered Serverless.

A financial example is also covered in Chapter 1. This example focuses on a low-use application, a registration form that is used 20 times a day. Processing the form submission takes 1 second. If we run a small EC2 instance hosting this form and its processing, the cost would be roughly $1.11 per day. If we take a serverless approach, the cost would be roughly $0.000067 per day.

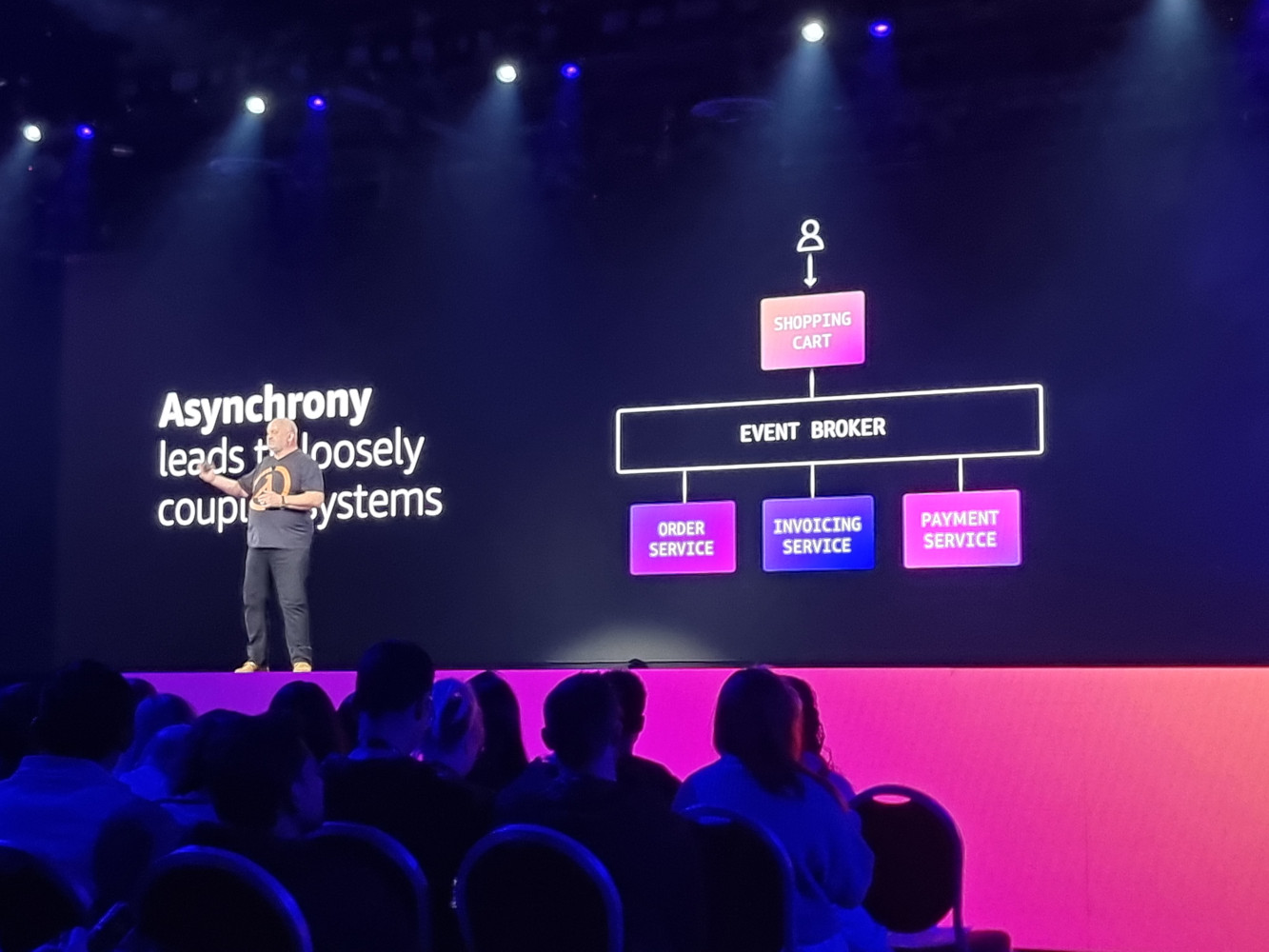

Microservices

While not synonymous with Serverless, microservices are often found in this architecture. Chapter 1 introduces microservices, explaining what they are without getting too technical.

Microservices are like mini-applications that each handle a specific feature or set of closely related features. Microservices are able to work together to meet an application’s complete set of requirements. Microservices are what we call loosely coupled, meaning that they can function independently of each other. If one microservice fails, the other microservices can still perform their respective tasks.

On AWS, microservices will typically run in the Lambda service. This service takes care of almost everything; you only need to upload your microservice code and configure the integrations and access permissions.

Following microservices, Chapter 1 provides a simple architecture example and walks through the different components. Several key aspects of Serverless are called out in the design.

In the example, the API Service (AWS API Gateway) takes care of validating and formatting any user input, and it will protect us from attacks such as SQL injection, so we don’t need to develop those capabilities in our microservices.

An advantage of microservices is that they are very reusable. A single microservice, such as a generic image processor, can be used by multiple projects and managed centrally as a shared service.

A shared microservice does not necessarily need access to potentially sensitive application resources. An application can pass a task to a microservice, which processes it and returns a result to the application.

Due to the distributed nature of Serverless, each microservice can have its own set of unique permissions. For example, a user profile microservice can have exclusive access to a sensitive personal data database.

Challenges and Benefits

Chapter 1 provides a refreshed version of the key challenges and benefits of Serverless that were in the first edition.

The challenges that are relevant today include:

- Vendor lock-in (but does that really matter?)

- Finding Talent (especially with serverless experience)

- Less control (it's ok to let go)

- SLAs of latest services (research and try before deploying to production...)

- Latency (definitely far better than two years ago, but still something to keep an eye on)

- Unlimited scaling (without limits can be costly)

- Difficult to estimate operational costs

- Cloud management (traditional approaches will not be productive)

- Service limits (read the manual before using a service)

And relevant benefits include:

- Near-zero wastage (same as before, but better)

- Reduced scope of responsibility (focus on where you can add value)

- Accurate cost estimation (while difficult, it can be very accurate)

- Highly reusable (boosting productivity)

- Better security (those fine-grained permissions)

- Naturally supports agility and good DevOps practices)

- Easier to manage time (lots of small projects)

- Highly scalable and fast (and safe when suitable limits are configured)

- Significantly lower maintenance costs (ideally, zero maintenance)

Chapter 1 also delves into the history of Serverless, types of suitable projects, some public case studies, and common objections.

Check out the book's mini site for more information and ordering here: https://serverlessbook.co